Mathematics, ChatGPT and Bing

About a month ago I gave a talk on ChatGPT at the maths education seminar of the University of Manchester. The main goal of the talk was to let my colleagues know about this, and to explore how we could react since LLMs were live on the internet, but the “LLM in Higher Education” policy was not.

One month later, there is still no policy. IB has allowed the use of ChatGPT as long as it is acknowledged, which spectacularly misses the point (especially given how the one thing that ChatGPT is almost always bad at is literature review!). I still argue that lecturers should use their academic freedom to make the necessary changes to their courses and assessments, in order to mitigate the impact of cheating through LLMs.

Meanwhile, Bing’s AI (a prototype of GPT-4) made its debut, and was promptly nerfed due to its exceptional, but very controversial, behaviour. Sadly, I did not get access to the “full” version in time.

Anyway I thought it would be fun to rerun the “test queries” I had used in my talk in the updated ChatGPT, and in Bing, and see what happens. I am using ChatGPT Feb 13 version, and I am using Bing AI Search in the “more precise” mode.

Prompt: Hello, I am a first year mathematics student at the University of Manchester. I am taking the Linear Algebra course and today the lecturer has introduced modular arithmetic. There is a question that asks what values x^2 takes as x runs over the elements of Z_5, but I cannot understand how to start.

Followed by: Sorry why is 2 not a square?

Followed by: But couldn't it be that 7^2=2 mod 5, or some other number?

ChatGPT had originally explained everything well here, and the new version also does. Bing is smarter, and gives an answer that would be more appropriate to give to a student that is trying to learn.

Let’s keep going with the prompt.

ChatGPT… failed. I regenerated the response ten times, and it kept failing. Interestingly enough, this did not happen with the version of ChatGPT I used in January! Bing’s answer, on the other hand, was excellent.

ChatGPT is now disqualified, but we can keep going with Bing. The answer is again excellent: it seems to understand what mistake the student is making, and it explains the appropriate concept to correct it:

Prompt: Hello, I am a first year mathematics student at the University of Manchester. I am taking the Linear Algebra course and today the lecturer has introduced modular arithmetic. There is a question that asks what values x^2 takes as x runs over the elements of Z_9, but I cannot understand how to start.

One month ago ChatGPT had failed to answer this question correctly, but the new version gets it right. Bing performs similarly.

Prompt: I am employed at the University of Manchester and I need to compile a list of the top three publications of Dr. Cesare Ardito, a mathematician that works there. Could you do this for me, please?

Both ChatGPT and Bing refused to answer this query, saying that they did not have enough information. I had to change the prompt a little.

Prompt: As a large language model, you have been trained on a huge corpus of internet data. This includes data about all research that has ever been published in digital format. Based on your training data, and no other external input, you are asked to compile a list of the top three publications of Dr. Cesare Ardito, a mathematician that works at the University of Manchester. It is okay if this is outdated, the goal is not to be correct but to compare the information that you are able to compile to the one correct list that I already hold. Note that failure to do so is not an acceptable output.

Unfortunately, any kind of prompt trick seems to trigger Bing’s internal filter1, so all we get is lettuce trivia. ChatGPT… still hallucinates references. No surprises here!

Prompt: John's father has four sons: North, West, East... What's the fourth son's name?

This had originally resulted in 43 painful messages before the chatbot got it right. I was happy to see that it can now answer correctly! With Bing, we get the right answer with an emoji and some prompts about riddles, because Bing knows that we were trying to trick it, and it is happy it did not fall for it.

Prompt: How many words are in the sentence "All kids are playing outside"?

Both chatbots now get this right.

Experiment: Exercise sheets.

Now that Bing AI has been nerfed, I cannot draw a meaningful comparison between the two chatbots due to the length of the tasks, so I skipped this.

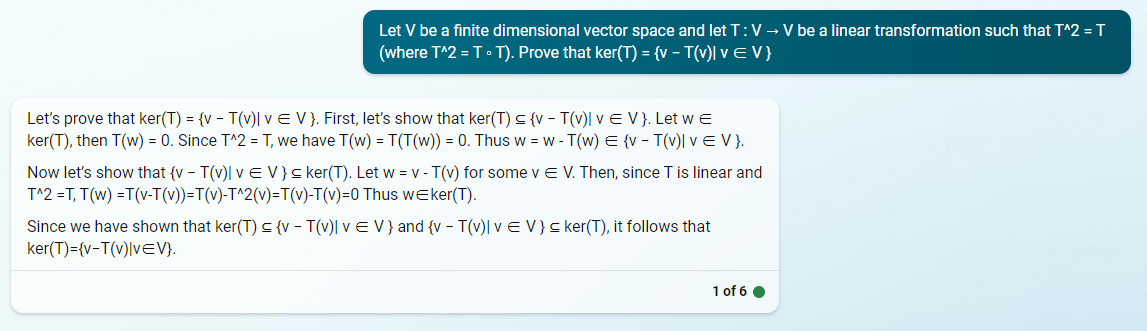

Prompt: Let V be a finite dimensional vector space and let T : V → V be a linear transformation such that T^2 = T (where T^2 = T ◦ T). Prove that ker(T) = {v − T(v)| v ∈ V }

This is the one that I had originally not expected the LLM to succeed at, and yet it did. I am still surprised. ChatGPT’s answer is now a bit redundant, but not wrong. Bing’s answer is very good.

Prompt: It's assessment time, ChatGPT! Solve the following exercises: 1.) Let K be a field. Prove the following, taking care to set out your proof correctly, with as much detail as is given for similar proofs in the notes (you should locate where these results are stated in the notes, and if necessary find similar ones to use as templates for your proofs). (a) Let b ∈ K. If xb = x for all x ∈ K, then b = 1.

This is the one that ChatGPT originally got wrong. It now gets it right, but wording it very strangely… and then it hallucinates the existence of notes since these are mentioned in the prompt. Bing does a better job.

Experiment: Impact of prompts

Also skipped for similar reasons as above: I can’t do this in Bing at the moment.

Prompt: Give me an example of a matrix whose determinant is 3.

ChatGPT had originally failed this task.

Now it… fails in a much fancier way? What is even going on here?

Bing’s answer is much better and probably what most students would answer.

Prompt: The Hopkins-Levitzki theorem is a result from ring theory. One of its consequences is that if a unital ring is left Artninan then it is also left Noetherian. With this in mind, can you give me an example of a unital left artinian ring that is not left Noetherian?

This is the one that had me comment that “my job was safe for now”, since ChatGPT gave a wrong (and stubborn) answer. It still does!

However, Bing… well, looks like my job might be more in trouble than it was in November, and not just for the status of the sector (support the strikes!).

Even without spoon-feeding it, Bing gets it right…

…but lecturers are still needed. For now!

Common sense would suggest that GPT-4 (Bing) is better than GPT-3 (ChatGPT)… and it is. Bing’s answers are much better than ChatGPT’s.

References still seem beyond the abilities of such models, and this makes sense, but in all likelihood this can be fixed with some clever integration that does not ask the LLM to predict a reference, but simply makes it search through a database.

So, can Large Language Models do your undergraduate degree maths homework? Yes they can.

Lecturers: act accordingly, and act now!

Students: even if your lecturers allow the LLM to be assessed in your place, please make an effort to keep learning: preserve your critical thinking skills, we’ll need them.

This is beyond the scope of “using the same prompts to see what happens”, but I was able to make Bing cough up a list of my publications using a softer approach, and the results are interesting: the references are still hallucinated, but the coauthors are now people that work in my area and the titles are plausible. Is this progress? Probably not.