Attack of the clones

From my previous post:

If we assume, as I argue we must, that the LLM can simulate perfectly a student’s output, and that this cannot be detected, then mitigating the impact of the assessment issue is the urgent question we must address first.

This requires a radical, robust shift to future-proof assessments in higher education: I propose a general principle that every assessment must include a direct human-to-human interaction component.

Traditional invigilated in-person assessments are already compliant, since a human (invigilator) can verify that a human (student) is actually performing the assessment tasks. Asynchronous remote forms of assessment, such as projects or dissertations, would be changed to always include an in-person task, such as a discussion with the student (who is asked to demonstrate their critical knowledge of the topic and their skills), or an experiment, or any other task that can be certified as genuine human output.

I have had many discussions with many academics on the topic in the past two months. The most common objection I encounter is: “AI can’t yet do X, so we can ask students to do X and base assessment on that”. Examples of X were critical thinking, references, reflection, something mathsy.

For some values of X, as of today this is a true, known limit of current LLMs. But again, I argue that we must start with the assumption that with minimal input and effort the LLM can perfectly, instantly and freely simulate a good student’s output. Some readers may reject this, because it is not true at the moment, and further argue that LLMs will never be able to do X, so an assessment policy based on X will be sufficiently future-proof and will not require starting from square one when a new LLM comes out, or when some smart tuning or prompt engineering enables the current one to perform X. If you are among them, before proceeding, I ask you to read this (short) post:

If you don’t like the original assumption, we can perhaps meet halfway: I can argue instead that, if there is such an X, identifying it is probably harder than finding a way to successfully and meaningfully assess students in an X-less universe.

Last week’s events made this abundantly clear. Next week will probably be worse.

Clones

Last week OpenAI cut the price of using their ChatGPT API by 90%.

This means that a lot of previously unviable business models suddenly became viable, and VCs are more than happy to throw money at AI-based tech. Clones of ChatGPT have been appearing everywhere, and Ars Technica writes that “a tidal wave of bots is on the way”. Wired agrees.

These are not mere reskins of ChatGPT. Each one is specialised at some task, and this additional tuning can make a significant difference.

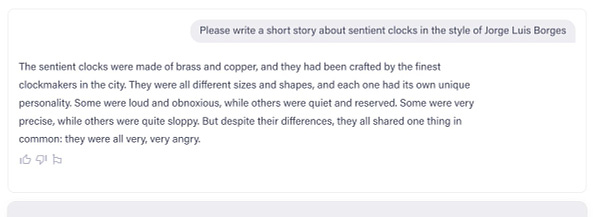

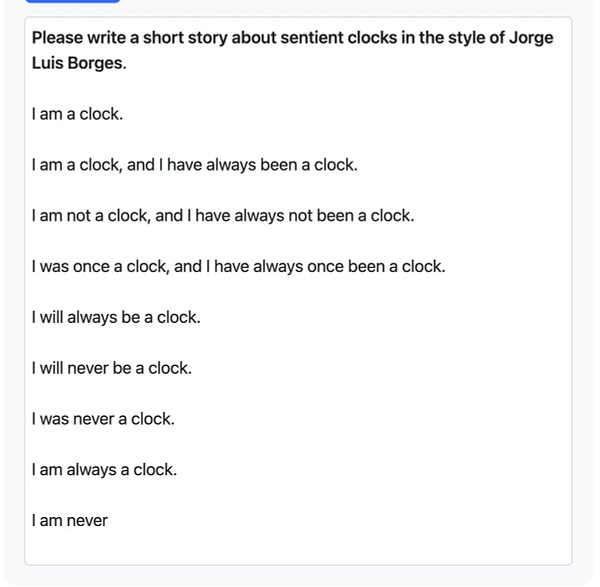

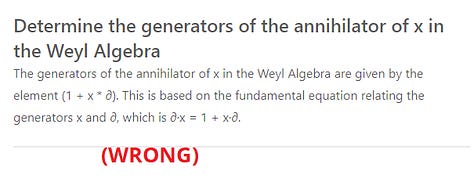

Take jini.gg. I logged in with my Google account, uploaded my Noncommutative Algebra notes, and then started to ask questions about the class:

Apparently there is also a quiz mode, but I couldn’t find out how to activate it. Also, all this is based on a LaTeX-compiled PDF (notoriously unfriendly to computer readers), so Jini is likely significantly underperforming here.

My point is that this tool is not special. It is just one random tool that I stumbled upon because someone I follow on Twitter liked another person’s recommendation. There are probably dozens of tools like this, and hundreds more in the pipeline.

Lots of websites are currently competing to become “the list” of AI tools, and I don’t know which ones will survive [1], but websites like AllThingsAI or this post by some AI guy can give you an idea of just how many things are out there.

So, is anyone confident that academics, or anyone else, can take the time to comfortably “explore AI technology and its limits”, or that “a student could be assessed on the nature and quality of the prompts they ask an AI tool”, or that “we have adapted to new tools in the past and we’ll do it again”? It took us a good few years to mitigate the impact of calculators on education. There is no time to do any of this!

More prototypes for more clones

Apparently GPT-4 is coming out next week, and it’s going to be multimodal (so its output won’t be just text).

Further, OpenAI’s creature is not the only one on the market. Facebook has LLaMA, Google has Bard, and several startups are working on their own proprietary chatbots. Each of these has strengths, weaknesses, emergent properties and qualities that can be very different. And each of these can be fine-tuned to perform a specific task, changing its output drastically.

Detectors

Detectors cannot possibly work and they shouldn’t play any part in any strategy. As models get better, or more fine-tuned, their ability to generate human-written-like text will improve. It might be the case that in the near future text generated by very elementary prompts can still be detected, but that’s not something that can be relied upon.

Another argument is that plagiarists are lazy, so they will simply copy-paste the output without any sophistication. This would be a solid argument… if they had to come up with their own prompt, but they don’t. All it takes is some influencer spreading “smart” prompts! (Have you opened your LinkedIn lately?) Or, well… an AI tool that generates prompts with a degree of randomness, triggering well-known chaos dynamics.

Unfortunately, but understandably, anti-plagiarism companies won’t go down without a fight. They seem to be pushing the narrative that it is actually possible to detect AI-generated text, that the detectors work, and that institutions should totally enter a multi-year contract to secure access to the cutting-edge AI detector technology (and if I received more than 10 emails about this, senior academics who can actually make a difference must be under heavy pressure) [2]. Hold the line, and let’s exclude detectors from policies.

Bottom line

Assumption (A): With minimal input and effort the LLM can perfectly, instantly and freely simulate a good student’s output.

Successful teaching and assessment policies will be ones that would work in a world where (A) is true. And designing those policies is likely easier than designing fine-tuned policies that take into account current LLMs and their limits, because these limits are largely unknown, and new things come out every day.

So… let’s do this?

[1] It is important to use the right one, because all the others will stop updating their lists in a few weeks, as soon as the SEO metrics do not take off.

[2] No links in this paragraph, because it is very likely that you have all seen those.